Section 1 – Why AI talent matching is colliding with MSP governance

Vendor Management Systems such as Beeline, SAP Fieldglass, and VNDLY were built to govern contingent talent, not to charm hiring managers. Yet AI talent matching engines are now quietly rewriting how every job is sourced, screened, and routed before the VMS even sees a requisition. That tension matters, because the MSP system owns rate cards, compliance attestations, and hiring decisions, while the AI agent increasingly owns the candidate pipeline, match scores, and outreach cadence.

In a typical MSP staffing program, the VMS enforces the rules of the game while suppliers compete to present the best candidate for each set of job requirements. Now autonomous agents, often AI-powered talent tools built on machine learning models, are optimizing for supplier win rate, not for enterprise governance or long-term career outcomes. When those agents control job titles, screening questions, and match score thresholds, they effectively become a shadow governance layer that no MSP steering committee ever approved.

Program owners feel the impact first in time to submit and in the quality of skills alignment for scarce profiles such as a senior software engineer. AI talent matching can surface ideal candidates in near real time, using data from résumés, assessments, and internal performance reviews to calculate nuanced match probabilities. Yet without explicit rules, the same matching engine can overfit to historical hiring patterns, amplifying hidden bias and narrowing the definition of top talent to whoever looked successful in the last project cycle.

MSP leaders cannot treat these AI systems as just another sourcing tool, because they reshape the entire process of talent acquisition. When an artificial intelligence agent rewrites a requisition, it may subtly change the job scope, alter required skill levels, or shift location expectations, which in turn affects pay bands and co-employment risk. That is not a minor tweak to a posting; it is a governance event that should be visible in the VMS audit trail and in every quarterly business review with your MSP.

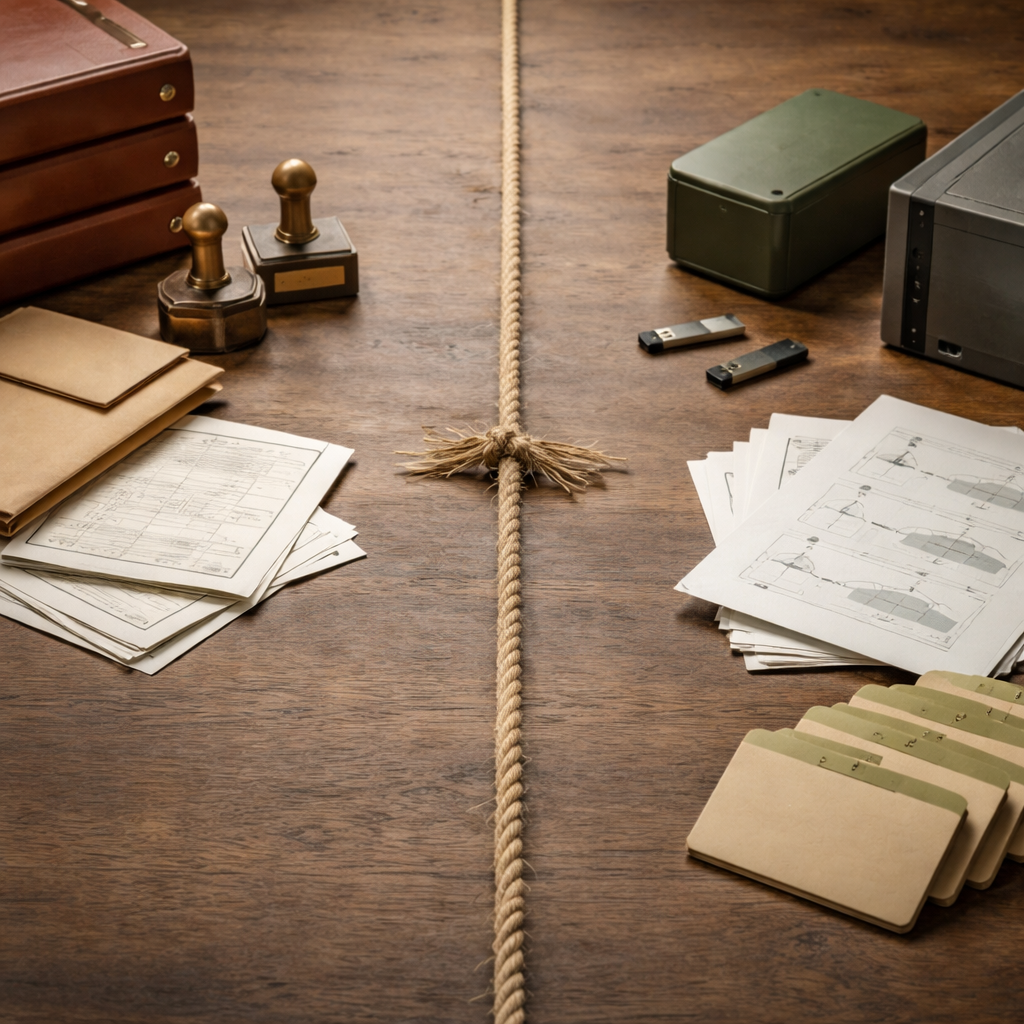

There is also a structural conflict of interest that HR and procurement leaders must name clearly. The VMS answers to the enterprise and to the MSP program office, while the AI agent usually answers to the staffing supplier’s revenue targets and to its own hiring manager relationships. Treating both layers as interchangeable technology is how program leaders accidentally hand control of hiring decisions to a vendor stack they do not own, do not configure, and often do not even see.

Section 2 – Drawing the functional line between VMS control and AI matching engines

To regain control, you need a crisp map of what the VMS owns versus what the AI talent matching system can influence. The VMS should remain the source of truth for rate cards, supplier tiers, time entry, invoicing, and compliance attestations, while AI matching engines focus on ranking candidates against clearly defined job requirements. Anything that changes scope, pay, or worker classification must stay inside the VMS and MSP governance workflow, not in a supplier’s internal systems.
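
The ownership split above can be sketched as a check on any requisition edit an AI agent proposes. This is a minimal illustration, not a real VMS API: the field names (`job_title`, `skills`, `bill_rate`, `remote`) and the `classify_changes` helper are assumptions made for the example.

```python
# Hypothetical field names; a real VMS schema will differ.
# Only these fields may be changed as AI suggestions. Everything else
# (scope, pay, worker classification, unknown fields) is escalated.
AI_SUGGESTIBLE = frozenset({"job_title", "skills"})


def classify_changes(original: dict, proposed: dict) -> dict:
    """Sort changed fields into AI suggestions and changes needing VMS/MSP approval.

    Fields not listed in AI_SUGGESTIBLE are escalated by default, so an
    unrecognized field can never be changed silently.
    """
    result = {"suggestion": [], "needs_vms_approval": []}
    for field in sorted(set(original) | set(proposed)):
        if original.get(field) == proposed.get(field):
            continue  # unchanged field, nothing to route
        if field in AI_SUGGESTIBLE:
            result["suggestion"].append(field)
        else:
            result["needs_vms_approval"].append(field)
    return result


original = {"job_title": "Software Engineer", "bill_rate": 95.0}
proposed = {"job_title": "Senior Software Engineer", "bill_rate": 110.0}
print(classify_changes(original, proposed))
```

Escalating by default is a deliberate choice here: an allow-list of AI-editable fields fails safe when a supplier tool introduces a field nobody anticipated.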

On the AI side, it is reasonable to let models suggest better job titles, propose alternative skills combinations, or flag adjacent career paths that might yield ideal candidates. It is not reasonable to let models trained on opaque data rewrite the requisition in ways that bypass your rate card, diversity goals, or co-employment guardrails. When an AI matching engine starts auto-negotiating start dates or remote work terms, it is stepping into decision-making territory that belongs to the enterprise, the MSP, and the accountable hiring managers.

Think of it this way: the VMS is the referee, while the AI agent is a very smart coach for one talent supplier. You want the coach to optimize outreach, screening, and match scores so that the supplier presents top talent quickly, especially for hard to fill software engineer roles. You do not want that coach to move the goalposts by redefining what a compliant job looks like or by sourcing outside your approved supplier network because its machine learning models think a different channel will yield a higher match score.

Modern MSP programs are already experimenting with more integrated architectures, including approaches similar to those described in independent analyses of next-generation talent management systems. In these designs, AI talent matching is explicitly constrained by VMS rules, so that AI-powered talent recommendations cannot violate rate cards or compliance workflows. The goal is not to slow down hiring; the goal is to ensure that every AI-assisted hiring decision still aligns with the enterprise’s risk appetite and with the MSP’s contractual obligations.

Program owners should document this functional line in their MSP playbooks and in their supplier scorecards. For example, you might allow AI agents to propose ideal candidates in real time and to pre rank them using data driven match logic, while reserving final shortlisting to the VMS workflow where hiring managers and the MSP PMO can see every match score. That way, AI talent matching improves talent acquisition efficiency without quietly rewriting the rules of engagement behind the scenes.

Section 3 – Where AI talent matching breaks: bias, audit trails, and invisible spend

The first failure mode appears when AI agents start drafting or editing requisitions in ways that change the underlying job without any human noticing. A supplier side AI might shorten a software engineer description, drop a travel requirement, or tighten a skill list to mirror profiles that previously won offers, all to improve its own match scores. That looks like efficiency, but it also shifts risk, because the enterprise now owns a job posting that no one in HR, procurement, or the MSP actually approved.

The second failure mode is sourcing outside the agreed supplier network or geography because the matching engine is purely based on probability of match, not on your program design. An AI agent that scrapes public profiles to find ideal candidates may ignore your diversity goals, your pay equity commitments, or your data privacy standards. When that happens, the MSP’s carefully negotiated process for talent acquisition is replaced by a black box system that optimizes for speed and supplier margin, not for enterprise level compliance.

The third failure mode is bias and auditability. When an EEO investigator or the Department of Labor asks why a specific candidate was rejected for a job, you need an audit trail that explains the decision-making logic, including any artificial intelligence models involved. If the only explanation lives in a supplier’s proprietary stack, you are effectively asking regulators to trust a machine learning narrative that you cannot independently verify, which is a weak position for any MSP-governed program.

There is also the CFO angle; total AI spend inside contingent staffing is largely invisible today, spread across supplier invoices, embedded VMS features, and niche tools that support hiring managers. When you do not know how much you are paying for AI talent matching, you cannot evaluate its impact on fill rates, time to submit, or quality of fit for critical job titles. That opacity undermines the very reason you implemented an MSP and a VMS in the first place, which was to gain line of sight into every lever that shapes hiring decisions.

Program leaders should treat the recent wave of AI recruiting agents, including those highlighted in third party analyses of AI interview companion capabilities, as a prompt to harden their governance. Every supplier using AI talent matching should disclose which models were trained on which categories of data, how match scores are calculated, and how bias is monitored over time. Without that transparency, you are effectively outsourcing not just sourcing, but also ethical judgment and regulatory exposure, to tools you did not select and cannot fully audit.
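
One way to make "how bias is monitored" concrete is to compare selection rates across groups. The sketch below uses the four-fifths (80%) heuristic from the US Uniform Guidelines on Employee Selection Procedures; the text above does not name a method, so this is a swapped-in illustration rather than the article's own approach. The group labels and counts are invented, and a flag here is a prompt for review, not a legal finding.

```python
def impact_ratios(outcomes: dict) -> dict:
    """Return each group's selection rate divided by the highest group's rate.

    outcomes maps a group label to (candidates_advanced, candidates_screened).
    Groups with no screened candidates are skipped.
    """
    rates = {g: adv / tot for g, (adv, tot) in outcomes.items() if tot > 0}
    if not rates:
        return {}
    top = max(rates.values())
    if top == 0:
        # Nobody advanced in any group, so no disparity can be measured.
        return {g: 1.0 for g in rates}
    return {g: rate / top for g, rate in rates.items()}


def flagged_groups(outcomes: dict, threshold: float = 0.8) -> list:
    """List groups whose impact ratio falls below the threshold."""
    return sorted(g for g, ratio in impact_ratios(outcomes).items() if ratio < threshold)


# Invented numbers for illustration only.
screening = {"group_a": (40, 100), "group_b": (24, 100), "group_c": (36, 100)}
print(flagged_groups(screening))
```

Running a check like this per requisition family and per supplier, on a regular cadence, gives the MSP PMO a trend line rather than a one-off snapshot.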

Section 4 – Contract language, pilot design, and a pragmatic middle path

Contingent workforce leaders do not need another abstract AI strategy; they need specific levers in their MSP contracts and VMS configurations. Start with model disclosure clauses that require every supplier to list the AI systems, matching engines, and artificial intelligence services they use in talent acquisition for your job families. Tie that disclosure to a right to audit, so you can review data categories, model training sources, and match logic when regulators or hiring managers raise questions.

Next, address data privacy and ownership directly in the statement of work. Specify that internal worker data, such as performance reviews or skills assessments, may be used for AI talent matching only within your enterprise and MSP ecosystem, not to train generic AI-powered talent products. Require suppliers to maintain audit logs for every AI-influenced hiring decision, including the match score, the job requirements used, and any automated screening questions that might introduce bias against protected groups.
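
The audit log requirement above could translate into a record shape like the following. This is a sketch under stated assumptions: the `MatchAuditRecord` class, its field names, and the identifier formats are all hypothetical, and a real contract would define the schema with the supplier.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone
from typing import Optional


@dataclass(frozen=True)
class MatchAuditRecord:
    """One AI-influenced decision, captured at the moment it was made (hypothetical schema)."""
    requisition_id: str
    candidate_id: str
    supplier_id: str
    model_name: str
    match_score: float
    job_requirements: tuple      # the requirements the model actually scored against
    screening_questions: tuple   # any automated questions shown to the candidate
    human_reviewer: Optional[str] = None
    recorded_at: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )


def to_log_line(record: MatchAuditRecord) -> str:
    """Serialize a record as one JSON line, suitable for an append-only log."""
    return json.dumps(asdict(record), sort_keys=True)


record = MatchAuditRecord(
    requisition_id="REQ-1",
    candidate_id="CAND-9",
    supplier_id="SUP-3",
    model_name="example-matcher",
    match_score=0.82,
    job_requirements=("python", "5+ years"),
    screening_questions=("Are you authorized to work in this region?",),
)
print(to_log_line(record))
```

Storing the requirements and questions alongside the score matters because a score alone cannot be explained later if the requisition text has since changed.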

Then design a narrow pilot instead of a big bang rollout. Choose one supplier, one requisition family such as software engineer roles, and one region where your MSP already has strong talent coverage and reliable hiring manager engagement. Configure the VMS so that AI generated match scores appear as a field in the requisition, visible to hiring managers and the MSP PMO, but not allowed to auto reject any candidate without human review.
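
The "visible but never auto-rejecting" rule can be sketched as a routing function with no reject outcome at all. The 0 to 1 score scale, the `0.75` threshold, and the `Route` names are assumptions chosen for the example, not settings from any specific VMS.

```python
from enum import Enum


class Route(Enum):
    """Possible outcomes of AI scoring. There is deliberately no REJECT member."""
    SHORTLIST_SUGGESTED = "shortlist_suggested"
    HUMAN_REVIEW = "human_review"


def route_candidate(match_score: float, suggest_threshold: float = 0.75) -> Route:
    """Use the AI score only to prioritize; every candidate still reaches a human.

    Assumes scores are normalized to the range 0.0 to 1.0 (hypothetical scale).
    """
    if not 0.0 <= match_score <= 1.0:
        raise ValueError(f"match_score out of range: {match_score}")
    if match_score >= suggest_threshold:
        return Route.SHORTLIST_SUGGESTED
    return Route.HUMAN_REVIEW


print(route_candidate(0.9))
print(route_candidate(0.2))
```

Leaving rejection out of the type entirely means a configuration mistake can at worst mis-prioritize a candidate, never silently remove one.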

Use that pilot to test specific hypotheses about time to submit, quality of fit, and diversity of candidates across key job titles. Compare AI assisted submittals against a control group that uses traditional sourcing, and track whether the matching engine is surfacing genuinely new top talent or simply reordering the same profiles. If the pilot shows that AI talent matching improves career outcomes for workers and business outcomes for hiring managers, you can scale with confidence and with clear guardrails.
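
The pilot comparison above might be summarized with a few lines of analysis. In this sketch the record keys (`candidate_id`, `hours_to_submit`), the `summarize` helper, and all the sample numbers are hypothetical; a real pilot would also need enough volume to tell a genuine difference from noise.

```python
from statistics import median


def summarize(submittals: list, known_profiles: set) -> dict:
    """Summarize one arm of the pilot.

    submittals: dicts with 'candidate_id' and 'hours_to_submit' (hypothetical keys).
    known_profiles: candidate IDs already present in the program before the pilot.
    """
    hours = [s["hours_to_submit"] for s in submittals]
    new = [s for s in submittals if s["candidate_id"] not in known_profiles]
    return {
        "count": len(submittals),
        "median_hours_to_submit": median(hours) if hours else None,
        "share_new_profiles": len(new) / len(submittals) if submittals else None,
    }


# Invented sample data for illustration only.
known = {"c1", "c2", "c3"}
ai_assisted = [
    {"candidate_id": "c1", "hours_to_submit": 10},
    {"candidate_id": "c7", "hours_to_submit": 20},
]
control = [
    {"candidate_id": "c2", "hours_to_submit": 40},
    {"candidate_id": "c3", "hours_to_submit": 60},
]
print("AI-assisted:", summarize(ai_assisted, known))
print("Control:    ", summarize(control, known))
```

A low share of new profiles in the AI-assisted arm would suggest the engine is mostly reordering candidates the program already knew about.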

Finally, remember that MSP staffing is not just a technology stack; it is a governance model that spans HR, procurement, finance, and line leaders. When you integrate AI talent matching into that model, you should also revisit how regional staffing partners, such as those described in independent analyses of MSP staffing and workforce solutions, align their own AI tools with your enterprise standards. The real test of maturity is whether your program can explain, in plain language, why a specific candidate was matched to a specific job at a specific time, and can give that explanation not just to a regulator but to the worker whose career depends on that hiring decision.

Key figures on AI talent matching in MSP staffing

- Industry research has projected that more than half of talent leaders plan to integrate autonomous AI agents into their recruiting workflows within the next planning cycle, signalling that AI talent matching will rapidly become a standard expectation in MSP programs rather than an experimental add on. Program leaders should consult the latest market guides or HCM research notes from firms such as Gartner to validate the current percentage for their region and industry.

- Major talent platforms such as Eightfold, Paradox, and Phenom have each released significant updates to their AI agents across the interview and screening journey, which means MSP leaders must now assume that many suppliers are already using AI driven matching engines even when contracts do not explicitly mention them. Vendor release notes and product briefings provide the most up to date detail on specific capabilities.

- Beeline’s acquisition of MBO Partners, announced in 2025, illustrates how VMS providers are expanding into Agent of Record and Employer of Record territory, tightening the link between governance systems and the AI tools that influence hiring decisions for contingent talent. Public press releases from both organizations describe the scope and timing of this deal.

- Research from Korn Ferry has indicated that a large majority of talent acquisition leaders rank critical thinking and problem solving as top recruiting priorities, often above AI specific skills, underscoring that human oversight of AI talent matching remains essential for ethical and compliant MSP staffing. Because Korn Ferry periodically refreshes these surveys, program leaders should reference the latest published report for precise percentages.